What is LLM API?

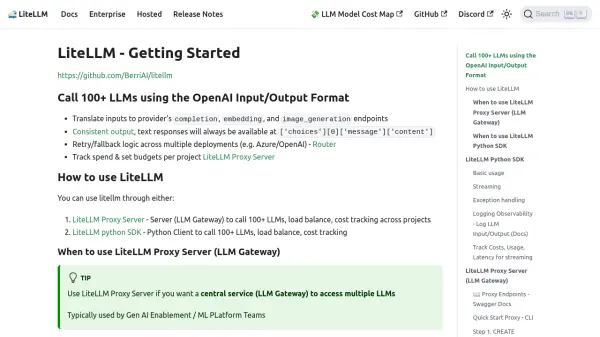

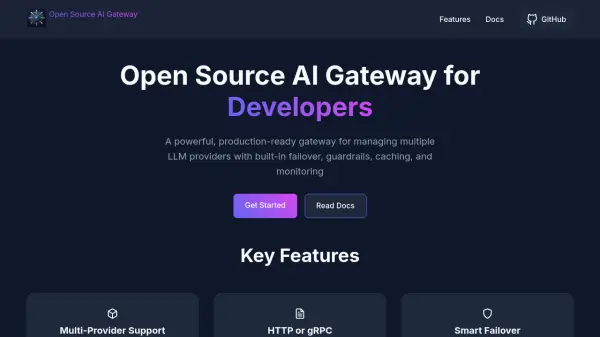

LLM API gives users access to more than 200 AI models, including models from OpenAI, Anthropic, Google, Meta, xAI, and other providers, through a single unified API endpoint. The service is aimed at developers and enterprises that want to integrate multiple AI capabilities without maintaining a separate API integration for each provider.

LLM API is compatible with any OpenAI SDK and returns responses in a consistent format across models, so the same client code can be reused when switching between models. The infrastructure scales from prototypes to production environments, billing is usage-based, and support is available 24/7. This makes LLM API a fit for organizations that want to use current language, vision, and speech models at scale.
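To make the single-endpoint idea concrete, here is a minimal sketch of an OpenAI-style chat completion request built with Python's standard library. The base URL, API key, and model identifier are placeholders assumed for illustration; they are not taken from LLM API's documentation, and the real values would come from your account.

```python
import json
import urllib.request

# Hypothetical values: replace with the endpoint, key, and model ID from your account.
BASE_URL = "https://api.example.com/v1"
API_KEY = "YOUR_API_KEY"


def build_chat_request(model: str, prompt: str,
                       base_url: str = BASE_URL,
                       api_key: str = API_KEY) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Under this assumption, switching providers only changes the model string.
request = build_chat_request("provider/model-name", "Say hello in French.")
# To actually send it: urllib.request.urlopen(request)
```

If you use the official OpenAI Python SDK instead, the equivalent step would be passing `base_url` and `api_key` to the client constructor and leaving the rest of the code unchanged.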

Features

- Multi-Provider Access: Connect to 200+ AI models from leading providers through one API

- OpenAI SDK Compatibility: Integrates in any language as a drop-in replacement for the OpenAI API

- Infinite Scalability: Flexible infrastructure supporting usage from prototype to enterprise-scale applications

- Unified Response Formats: Simplifies integration with consistent API responses across all models

- Usage-Based Billing: Only pay for the AI resources you consume

- 24/7 Support: Continuous assistance ensures platform reliability
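As an illustration of what a unified response format makes possible, the sketch below reads the reply text the same way regardless of which model produced it. It assumes responses follow the OpenAI chat completion shape (`choices[0].message.content`), which is a guess based on the stated OpenAI SDK compatibility; the sample response is invented for the example.

```python
from typing import Any


def extract_text(response: dict[str, Any]) -> str:
    """Return the assistant text from an OpenAI-style chat completion response."""
    choices = response.get("choices") or []
    if not choices:
        raise ValueError("response contains no choices")
    return choices[0]["message"]["content"]


# Invented sample response in the assumed OpenAI-style shape.
sample_response = {
    "model": "provider/model-name",
    "choices": [
        {"index": 0, "message": {"role": "assistant", "content": "Bonjour!"}}
    ],
}

text = extract_text(sample_response)
```

Because the parsing logic does not branch on the provider, the same helper can sit behind every model call in an application.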

Use Cases

- Deploying generative AI chatbots across various business platforms

- Integrating language translation, summarization, and text analysis into applications

- Accessing vision and speech recognition models for transcription and multimedia analysis

- Building educational or research tools leveraging multiple AI models

- Testing and benchmarking different foundation models without individual integrations
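The benchmarking use case can be sketched as a loop that sends one prompt to several models through the same call and records the latency of each. The model identifiers and the `send` function here are hypothetical; `fake_send` stands in for a real API call so the example runs offline.

```python
import time
from typing import Callable


def benchmark(models: list[str], prompt: str,
              send: Callable[[str, str], str]) -> list[dict]:
    """Send the same prompt to each model and record reply and elapsed time."""
    results = []
    for model in models:
        start = time.perf_counter()
        reply = send(model, prompt)
        elapsed = time.perf_counter() - start
        results.append({"model": model, "seconds": elapsed, "reply": reply})
    return results


def fake_send(model: str, prompt: str) -> str:
    """Offline stand-in for a real request to the unified endpoint."""
    return f"[{model}] {prompt.upper()}"


results = benchmark(["provider-a/model-x", "provider-b/model-y"], "ping", fake_send)
for row in results:
    print(f"{row['model']}: {row['seconds']:.6f}s")
```

Passing `send` as a parameter keeps the comparison logic independent of the transport, so swapping in a real client requires no change to `benchmark`.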

FAQs

- How is pricing calculated?

  Pricing is based on actual usage of API resources by the AI models accessed through LLM API.

- What payment methods do you support?

  Supported payment methods are listed during account setup, where users can select from standard payment options.

- How can I get support?

  Support is available 24/7 via the LLM API platform, so users can resolve technical or billing issues at any time.

- How is usage billed on LLM API?

  Usage is billed according to the AI model calls consumed, so users pay only for what they use.
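For a rough sense of how usage-based billing adds up, the sketch below estimates the cost of a single call from token counts. It assumes per-token pricing and an OpenAI-style `usage` object with `prompt_tokens` and `completion_tokens`; the page does not state the billing unit, and the prices shown are made up, so treat this as an illustration of the arithmetic only.

```python
from decimal import Decimal

MILLION = Decimal(1_000_000)


def estimate_cost(usage: dict, input_price_per_million: str,
                  output_price_per_million: str) -> Decimal:
    """Estimate the cost of one call from token counts and per-million prices."""
    input_cost = Decimal(usage["prompt_tokens"]) * Decimal(input_price_per_million) / MILLION
    output_cost = Decimal(usage["completion_tokens"]) * Decimal(output_price_per_million) / MILLION
    return input_cost + output_cost


# Hypothetical usage figures and prices (USD per million tokens).
usage = {"prompt_tokens": 1000, "completion_tokens": 500}
cost = estimate_cost(usage, input_price_per_million="2.00", output_price_per_million="6.00")
```

`Decimal` is used instead of floats so that small per-call amounts sum without rounding drift when aggregated over many calls.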