LLM monitoring tools for developers - AI tools

Laminar: The AI engineering platform for LLM products

Laminar is an open-source platform that enables developers to trace, evaluate, label, and analyze Large Language Model (LLM) applications with minimal code integration.

- Freemium

- From $25

Libretto: LLM Monitoring, Testing, and Optimization

Libretto offers comprehensive LLM monitoring, automated prompt testing, and optimization tools to ensure the reliability and performance of your AI applications.

- Freemium

- From $180

Literal AI: Ship reliable LLM Products

Literal AI streamlines the development of LLM applications, offering tools for evaluation, prompt management, logging, monitoring, and more to build production-grade AI products.

- Freemium

Keywords AI: LLM monitoring for AI startups

Keywords AI is a comprehensive developer platform for LLM applications, offering monitoring, debugging, and deployment tools. It serves as a Datadog-like solution specifically designed for LLM applications.

- Freemium

- From $7

BenchLLM: The best way to evaluate LLM-powered apps

BenchLLM is a tool for evaluating LLM-powered applications. It allows users to build test suites, generate quality reports, and choose between automated, interactive, or custom evaluation strategies.

- Other

Siloam AI: Advanced LLM monitoring and analytics for AI-powered applications.

Siloam AI provides comprehensive observability tools for Large Language Model applications, offering real-time monitoring, AI-powered analysis, and optimization features to help developers build better AI products.

- Freemium

- From $10

Hegel AI: Developer Platform for Large Language Model (LLM) Applications

Hegel AI provides a developer platform for building, monitoring, and improving large language model (LLM) applications, featuring tools for experimentation, evaluation, and feedback integration.

- Contact for Pricing

Helicone: Ship your AI app with confidence

Helicone is an all-in-one platform for monitoring, debugging, and improving production-ready LLM applications. It provides tools for logging, evaluating, experimenting, and deploying AI applications.

- Freemium

- From $20

-

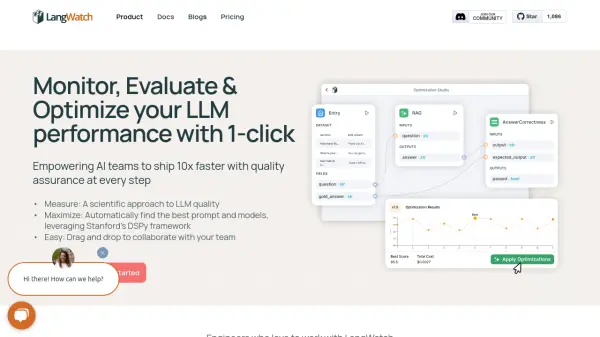

LangWatch Monitor, Evaluate & Optimize your LLM performance with 1-click

LangWatch Monitor, Evaluate & Optimize your LLM performance with 1-clickLangWatch empowers AI teams to ship 10x faster with quality assurance at every step. It provides tools to measure, maximize, and easily collaborate on LLM performance.

- Paid

- From $59

Conviction: The Platform to Evaluate & Test LLMs

Conviction is an AI platform designed for evaluating, testing, and monitoring Large Language Models (LLMs) to help developers build reliable AI applications faster. It focuses on detecting hallucinations, optimizing prompts, and ensuring security.

- Freemium

- From $249

Langfuse: Open Source LLM Engineering Platform

Langfuse provides an open-source platform for tracing, evaluating, and managing prompts to debug and improve LLM applications.

- Freemium

- From $59

phoenix.arize.com: Open-source LLM tracing and evaluation

Phoenix accelerates AI development with powerful insights, allowing seamless evaluation, experimentation, and optimization of AI applications in real time.

- Freemium

Braintrust: The end-to-end platform for building world-class AI apps.

Braintrust provides an end-to-end platform for developing, evaluating, and monitoring Large Language Model (LLM) applications. It helps teams build robust AI products through iterative workflows and real-time analysis.

- Freemium

- From $249

Gentrace: Intuitive evals for intelligent applications

Gentrace is an LLM evaluation platform designed for AI teams to test and automate evaluations of generative AI products and agents. It facilitates collaborative development and ensures high-quality LLM applications.

- Usage Based

W&B Weave: A Framework for Developing and Deploying LLM-Based Applications

Weights & Biases (W&B) Weave is a comprehensive framework designed for tracking, experimenting with, evaluating, deploying, and enhancing LLM-based applications.

- Other

LLMMM: Monitor how LLMs perceive your brand

LLMMM helps brands track their presence in leading AI models like ChatGPT, Gemini, and Meta AI, providing real-time monitoring and brand safety insights.

- Free

OpenLIT: Open Source Platform for AI Engineering

OpenLIT is an open-source observability platform designed to streamline AI development workflows, particularly for Generative AI and LLMs, offering features like prompt management, performance tracking, and secure secrets management.

- Other

Parea: Test and Evaluate your AI systems

Parea is a platform for testing, evaluating, and monitoring Large Language Model (LLM) applications, helping teams track experiments, collect human feedback, and deploy prompts confidently.

- Freemium

- From $150

LiteLLM: Unified API Gateway for 100+ LLM Providers

LiteLLM is a comprehensive LLM gateway solution that provides unified API management, authentication, load balancing, and spend tracking across multiple LLM providers including Azure OpenAI, Vertex AI, Bedrock, and OpenAI.

- Freemium
