Multi-Model Advisor

A Model Context Protocol server combining multiple AI model perspectives for richer advice.

(锵锵四人行, roughly "a lively four-way roundtable")

A Model Context Protocol (MCP) server that queries multiple Ollama models and combines their responses, providing diverse AI perspectives on a single question. This creates a "council of advisors" approach where Claude can synthesize multiple viewpoints alongside its own to provide more comprehensive answers.

    graph TD
        A[Start] --> B[Worker Local AI 1 Opinion]
        A --> C[Worker Local AI 2 Opinion]
        A --> D[Worker Local AI 3 Opinion]
        B --> E[Manager AI]
        C --> E
        D --> E
        E --> F[Decision Made]

Features

- Query multiple Ollama models with a single question

- Assign different roles/personas to each model

- View all available Ollama models on your system

- Customize system prompts for each model

- Configure via environment variables

- Integrate seamlessly with Claude for Desktop

Prerequisites

- Node.js 16.x or higher

- Ollama installed and running (see Ollama installation)

- Claude for Desktop (for the complete advisory experience)

Installation

Installing via Smithery

To install multi-ai-advisor-mcp for Claude Desktop automatically via Smithery:

npx -y @smithery/cli install @YuChenSSR/multi-ai-advisor-mcp --client claude

Manual Installation

- Clone this repository:

      git clone https://github.com/YuChenSSR/multi-ai-advisor-mcp.git
      cd multi-ai-advisor-mcp

- Install dependencies:

      npm install

- Build the project:

      npm run build

- Install required Ollama models:

      ollama pull gemma3:1b
      ollama pull llama3.2:1b
      ollama pull deepseek-r1:1.5b

Configuration

Create a .env file in the project root with your desired configuration:

    # Server configuration
    SERVER_NAME=multi-model-advisor
    SERVER_VERSION=1.0.0
    DEBUG=true

    # Ollama configuration
    OLLAMA_API_URL=http://localhost:11434
    DEFAULT_MODELS=gemma3:1b,llama3.2:1b,deepseek-r1:1.5b

    # System prompts for each model
    GEMMA_SYSTEM_PROMPT=You are a creative and innovative AI assistant. Think outside the box and offer novel perspectives.
    LLAMA_SYSTEM_PROMPT=You are a supportive and empathetic AI assistant focused on human well-being. Provide considerate and balanced advice.
    DEEPSEEK_SYSTEM_PROMPT=You are a logical and analytical AI assistant. Think step-by-step and explain your reasoning clearly.
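
As a rough illustration of how these variables could be consumed, the sketch below turns an environment map into a typed configuration object. It is not taken from the project's source: the `AdvisorConfig` shape, the function names, and the rule that maps a model name to its `*_SYSTEM_PROMPT` variable are all assumptions made for this example.

```typescript
// Hypothetical config shape; field names are illustrative, not the server's real types.
interface AdvisorConfig {
  apiUrl: string;
  models: string[];
  systemPrompts: Record<string, string>;
}

type Env = Record<string, string | undefined>;

// Assumption: the prompt variable is named after the model family in upper case,
// e.g. "gemma3:1b" -> GEMMA_SYSTEM_PROMPT, "deepseek-r1:1.5b" -> DEEPSEEK_SYSTEM_PROMPT.
function promptVarFor(model: string): string {
  const beforeTag = model.split(":")[0] ?? "";
  // Keep only the leading run of letters ("llama3.2" -> "llama").
  const family = beforeTag.replace(/[^a-zA-Z].*$/, "");
  return `${family.toUpperCase()}_SYSTEM_PROMPT`;
}

function parseAdvisorConfig(env: Env): AdvisorConfig {
  // DEFAULT_MODELS is a comma-separated list; tolerate spaces and empty entries.
  const models = (env["DEFAULT_MODELS"] ?? "")
    .split(",")
    .map((name) => name.trim())
    .filter((name) => name.length > 0);

  const systemPrompts: Record<string, string> = {};
  for (const model of models) {
    const prompt = env[promptVarFor(model)];
    if (prompt !== undefined) {
      systemPrompts[model] = prompt;
    }
  }

  return {
    // Falling back to Ollama's default local address is an assumption of this sketch.
    apiUrl: env["OLLAMA_API_URL"] ?? "http://localhost:11434",
    models,
    systemPrompts,
  };
}
```

In a Node.js process this could be called as `parseAdvisorConfig(process.env)` once the .env file has been loaded (for example with the dotenv package, if the project uses it).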

Connect to Claude for Desktop

- Locate your Claude for Desktop configuration file:

  - macOS: ~/Library/Application Support/Claude/claude_desktop_config.json
  - Windows: %APPDATA%\Claude\claude_desktop_config.json

- Edit the file to add the Multi-Model Advisor MCP server:

      {
        "mcpServers": {
          "multi-model-advisor": {
            "command": "node",
            "args": ["/absolute/path/to/multi-ai-advisor-mcp/build/index.js"]
          }
        }
      }

- Replace /absolute/path/to/ with the actual path to your project directory.

- Restart Claude for Desktop.

Usage

Once connected to Claude for Desktop, you can use the Multi-Model Advisor in several ways:

List Available Models

You can see all available models on your system:

Show me which Ollama models are available on my system

This will display all installed Ollama models and indicate which ones are configured as defaults.
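
A listing like this could be produced along the following lines. The sketch assumes the server reads installed models from Ollama's `GET /api/tags` endpoint, whose JSON response contains a `models` array with a `name` field per entry. The `ModelListing` type and both function names are hypothetical, and the real tool's output format may differ.

```typescript
// Minimal subset of the JSON returned by Ollama's GET /api/tags endpoint.
interface OllamaTagsResponse {
  models: { name: string }[];
}

// Hypothetical result type for this sketch.
interface ModelListing {
  name: string;
  isDefault: boolean;
}

// Flag each installed model that also appears in the configured defaults.
function listModels(tags: OllamaTagsResponse, defaults: string[]): ModelListing[] {
  const defaultSet = new Set(defaults);
  return tags.models
    .map((m) => ({ name: m.name, isDefault: defaultSet.has(m.name) }))
    .sort((a, b) => a.name.localeCompare(b.name));
}

// Render one line per model, marking the defaults.
function formatListing(listing: ModelListing[]): string {
  return listing
    .map((m) => (m.isDefault ? `- ${m.name} (default)` : `- ${m.name}`))
    .join("\n");
}
```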

Basic Usage

Simply ask Claude to use the multi-model advisor:

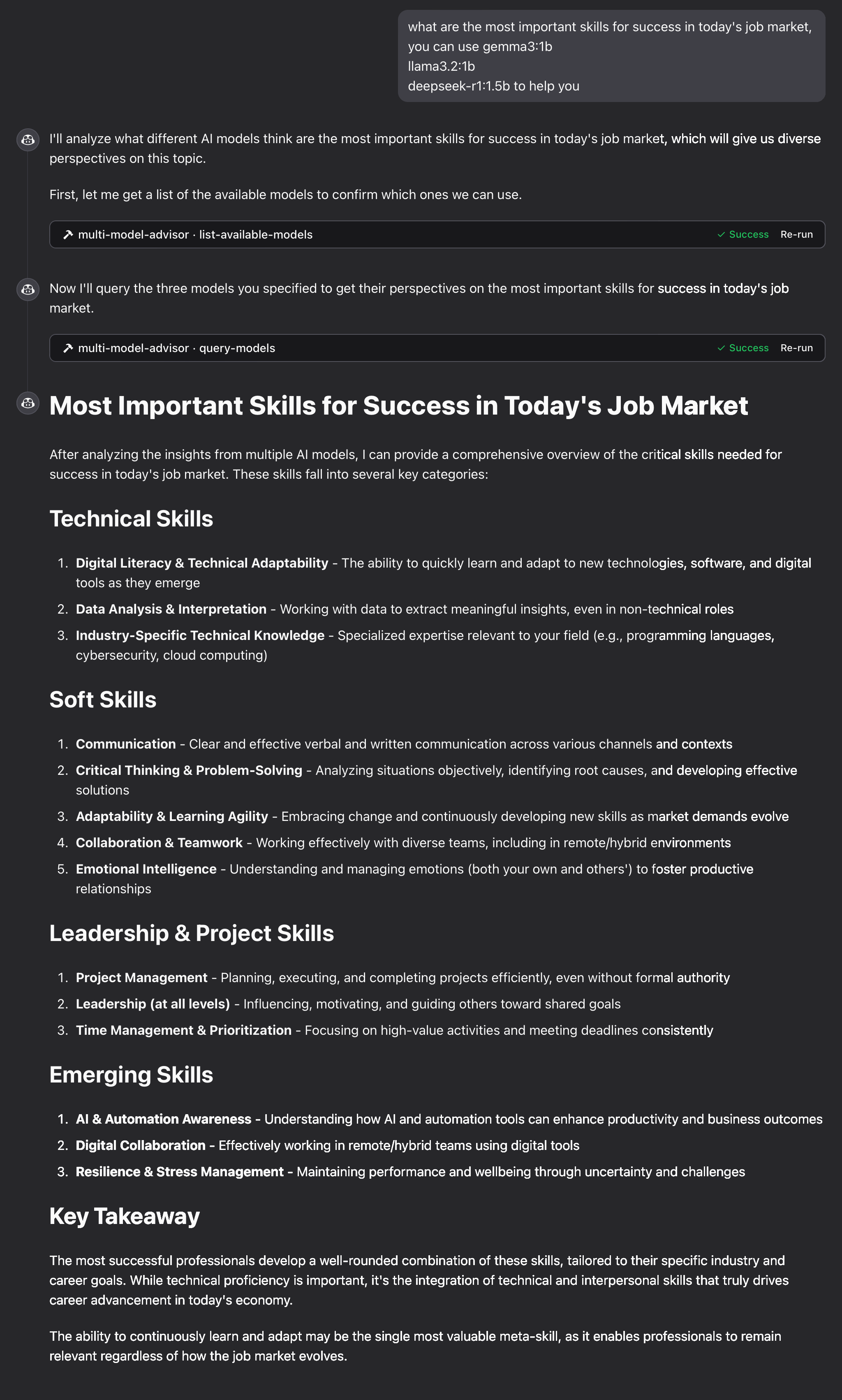

what are the most important skills for success in today's job market,

you can use gemma3:1b, llama3.2:1b, deepseek-r1:1.5b to help you

Claude will query all default models and provide a synthesized response based on their different perspectives.

How It Works

- The MCP server exposes two tools:

  - list-available-models: Shows all Ollama models on your system
  - query-models: Queries multiple models with a question

- When you ask Claude a question referring to the multi-model advisor:

  - Claude decides to use the query-models tool
  - The server sends your question to multiple Ollama models
  - Each model responds with its perspective
  - Claude receives all responses and synthesizes a comprehensive answer

- Each model can have a different "persona" or role assigned, encouraging diverse perspectives.
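
The fan-out step described above can be sketched as follows. This is an illustration under stated assumptions, not the server's actual code: `Advisor`, `AdvisorReply`, `AskFn`, `queryModels`, and `formatReplies` are hypothetical names, and the transport is injected so the example stays self-contained. A real `ask` function would presumably send a POST request to Ollama's `/api/generate` endpoint with the model name, the prompt, and the system prompt.

```typescript
// One advisor: an Ollama model plus an optional persona.
interface Advisor {
  model: string;
  systemPrompt?: string;
}

interface AdvisorReply {
  model: string;
  ok: boolean;
  text: string; // the model's answer, or an error message when ok is false
}

// Hypothetical transport: sends one question to one model and resolves with its answer.
type AskFn = (model: string, question: string, systemPrompt?: string) => Promise<string>;

async function queryModels(
  question: string,
  advisors: Advisor[],
  ask: AskFn,
): Promise<AdvisorReply[]> {
  // Issue every request before awaiting any of them, so they are in flight concurrently.
  const pending = advisors.map(async (advisor): Promise<AdvisorReply> => {
    try {
      const text = await ask(advisor.model, question, advisor.systemPrompt);
      return { model: advisor.model, ok: true, text };
    } catch (err) {
      // A failing model should not discard the other advisors' answers.
      const message = err instanceof Error ? err.message : String(err);
      return { model: advisor.model, ok: false, text: message };
    }
  });
  return Promise.all(pending);
}

// Combine the replies into one labelled text block for the manager model to synthesize.
function formatReplies(replies: AdvisorReply[]): string {
  return replies
    .map((r) => `## ${r.model}${r.ok ? "" : " (failed)"}\n${r.text}`)
    .join("\n\n");
}
```

Catching errors per advisor, rather than letting `Promise.all` reject on the first failure, means one missing or overloaded model still leaves Claude with the remaining perspectives.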

Troubleshooting

Ollama Connection Issues

If the server can't connect to Ollama:

- Ensure Ollama is running (ollama serve)
- Check that the OLLAMA_API_URL is correct in your .env file
- Try accessing http://localhost:11434 in your browser to verify Ollama is responding
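
The same check can be scripted. The sketch below is a hypothetical helper, not part of the project: it requests Ollama's `/api/tags` endpoint and reports whether the API answered. The HTTP call is passed in as a parameter (`HttpGet` is a name invented here) so that the example does not depend on a particular runtime.

```typescript
// Minimal shape of an HTTP response; the global fetch Response satisfies it.
interface HttpResult {
  ok: boolean;
  status: number;
}

// Hypothetical transport type for this sketch.
type HttpGet = (url: string) => Promise<HttpResult>;

type Health =
  | { reachable: true }
  | { reachable: false; reason: string };

async function checkOllama(apiUrl: string, get: HttpGet): Promise<Health> {
  // Strip trailing slashes so "http://host:11434/" and "http://host:11434" behave alike.
  const base = apiUrl.replace(/\/+$/, "");
  try {
    const res = await get(`${base}/api/tags`);
    return res.ok
      ? { reachable: true }
      : { reachable: false, reason: `Ollama answered with HTTP ${res.status}` };
  } catch (err) {
    // Network-level failures (connection refused, DNS errors) end up here.
    const message = err instanceof Error ? err.message : String(err);
    return { reachable: false, reason: `Could not reach ${base}: ${message}` };
  }
}
```

On Node.js 18 or later, where `fetch` is available globally, this could be called as `checkOllama("http://localhost:11434", (url) => fetch(url))`. Older Node.js versions would need an HTTP client library instead.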

Model Not Found

If a model is reported as unavailable:

- Check that you've pulled the model using ollama pull <model-name>
- Verify the exact model name using ollama list
- Use the list-available-models tool to see all available models
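
A mismatch between the requested and installed names is a common cause, because Ollama treats a name without a tag as `<name>:latest`. The following sketch compares the two lists after normalizing names and suggests the matching pull commands. The function names are hypothetical, and whether the server performs a check like this is an assumption.

```typescript
// Ollama resolves a model name without a tag to "<name>:latest".
function normalizeModelName(name: string): string {
  const trimmed = name.trim();
  return trimmed.includes(":") ? trimmed : `${trimmed}:latest`;
}

// Return the requested models that are not installed, in normalized form.
function findMissingModels(requested: string[], installed: string[]): string[] {
  const available = new Set(installed.map(normalizeModelName));
  return requested
    .map(normalizeModelName)
    .filter((name) => !available.has(name));
}

// Turn each missing model into the command that would install it.
function pullHints(missing: string[]): string[] {
  return missing.map((name) => `ollama pull ${name}`);
}
```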

Claude Not Showing MCP Tools

If the tools don't appear in Claude:

- Ensure you've restarted Claude after updating the configuration

- Check that the absolute path in claude_desktop_config.json is correct

- Look at Claude's logs for error messages

Not Enough RAM

The manager AI may select larger models than your system has memory to run. Try naming smaller models in your prompt (see Basic Usage) or adding more memory.

License

MIT License

For more details, see the LICENSE file in this project's repository.

Contributing

Contributions are welcome! Please feel free to submit a Pull Request.
