Open Data Model Context Protocol

Easily connect open data providers to LLMs using a Model Context Protocol server and CLI.


See it in action

https://github.com/user-attachments/assets/760e1a16-add6-49a1-bf71-dfbb335e893e

We enable 2 things:

- Open Data Access: Access to many public datasets right from your LLM application (starting with Claude, more to come).

- Publishing: Get community help and a distribution network to distribute your Open Data. Get everyone to use it!

How do we do that?

- Access: Set up our MCP servers in your LLM application in 2 clicks via our CLI tool (starting with Claude; see the Roadmap for next steps).

- Publish: Use provided templates and guidelines to quickly contribute and publish on Open Data MCP. Make your data easily discoverable!

Usage

Access: Access Open Data using Open Data MCP CLI Tool

Prerequisites

To use Open Data MCP with Claude Desktop, you first need to install the Claude Desktop app.

You will also need uv to easily run our CLI and MCP servers.

macOS

# Install uv through Homebrew: the install shell script installs it locally for your user,

# which makes it unavailable in the Claude Desktop app context.

brew install uv

Windows

# (UNTESTED)

powershell -ExecutionPolicy ByPass -c "irm https://astral.sh/uv/install.ps1 | iex"

Open Data MCP - CLI Tool

Overview

# show available commands

uvx odmcp

# show available providers

uvx odmcp list

# show info about a provider

uvx odmcp info $PROVIDER_NAME

# set up a provider's MCP server in your Claude Desktop app

uvx odmcp setup $PROVIDER_NAME

# remove a provider's MCP server from your Claude Desktop app

uvx odmcp remove $PROVIDER_NAME

Example

Quickstart for the Switzerland SBB (train company) provider:

# make sure the Claude Desktop app is installed

uvx odmcp setup ch_sbb

Restart Claude and you should see a new hammer icon at the bottom right of the chat.

You can now ask Claude questions about SBB train network disruptions, and it will answer based on data collected from data.sbb.ch.
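For context, Claude Desktop reads its MCP servers from an `mcpServers` map in its `claude_desktop_config.json` file. The sketch below illustrates the kind of entry a setup step has to add and a remove step has to delete. The `add_server` and `remove_server` helpers and the `uvx odmcp run ch_sbb` command line are assumptions made for illustration, not the CLI's actual implementation.

```python
import json


def add_server(config: dict, name: str, command: str, args: list[str]) -> dict:
    """Return a copy of a Claude Desktop config with one MCP server entry added."""
    updated = json.loads(json.dumps(config))  # deep copy via a JSON round-trip
    servers = updated.setdefault("mcpServers", {})
    servers[name] = {"command": command, "args": list(args)}
    return updated


def remove_server(config: dict, name: str) -> dict:
    """Return a copy of the config without the named MCP server entry."""
    updated = json.loads(json.dumps(config))
    updated.get("mcpServers", {}).pop(name, None)
    return updated


# Hypothetical entry: the exact command and arguments written by the CLI
# are an assumption here.
config = add_server({}, "ch_sbb", "uvx", ["odmcp", "run", "ch_sbb"])
print(json.dumps(config, indent=2))
```

Returning a modified copy rather than editing in place makes it easy to compare the old and new configuration before writing anything to disk.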

Publish: Contribute by building and publishing public datasets

Prerequisites

- Install UV Package Manager

# macOS

brew install uv

# Windows (PowerShell)

powershell -ExecutionPolicy ByPass -c "irm https://astral.sh/uv/install.ps1 | iex"

# Linux/WSL

curl -LsSf https://astral.sh/uv/install.sh | sh

- Clone & Setup Repository

# Clone the repository

git clone https://github.com/OpenDataMCP/OpenDataMCP.git

cd OpenDataMCP

# Create and activate virtual environment

uv venv

source .venv/bin/activate # Unix/macOS

# or .venv\Scripts\activate # Windows

# Install dependencies

uv sync

- Install Pre-commit Hooks

# Install pre-commit hooks for code quality

pre-commit install

Publishing Instructions

- Create a New Provider Module

  - Each data source needs its own Python module.

  - Create a new Python module in src/odmcp/providers/.

  - Use a descriptive name following the pattern: {country_code}_{organization}.py (e.g., ch_sbb.py).

  - Start with our template file as your base.
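The naming pattern above can be checked mechanically. The following is a minimal sketch, assuming a two-letter lowercase country code followed by a lowercase organization slug; the regular expression and the `is_valid_provider_filename` helper are illustrative and not part of the project.

```python
import re

# Assumption: two lowercase letters for the country code, an underscore,
# then a lowercase organization slug. The project may accept other forms.
PROVIDER_MODULE_RE = re.compile(r"[a-z]{2}_[a-z0-9_]+\.py")


def is_valid_provider_filename(filename: str) -> bool:
    """Check a module filename against the {country_code}_{organization}.py pattern."""
    return PROVIDER_MODULE_RE.fullmatch(filename) is not None


for candidate in ("ch_sbb.py", "sbb.py", "CH_SBB.py"):
    print(candidate, is_valid_provider_filename(candidate))
```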

- Implement Required Components

  - Define your Tools & Resources following the template structure

  - Each Tool or Resource should have:

    - Clear description of its purpose

    - Well-defined input/output schemas using Pydantic models

    - Proper error handling

    - Documentation strings
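As a rough illustration of these four requirements, the sketch below defines an input schema, an output schema, a documented tool function, and a handler that turns invalid input into a readable error. To stay dependency-free it uses standard-library dataclasses as a stand-in for the Pydantic models the guidelines ask for. All names (`DisruptionQuery`, `Disruption`, `find_disruptions`, `handle_request`) and the sample records are made up for the example and are not taken from the project template.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DisruptionQuery:
    """Input schema (hypothetical): which station to look up and how many results."""
    station: str
    limit: int = 5

    def __post_init__(self) -> None:
        if not isinstance(self.station, str) or not self.station.strip():
            raise ValueError("station must be a non-empty string")
        if not isinstance(self.limit, int) or not 1 <= self.limit <= 100:
            raise ValueError("limit must be an integer between 1 and 100")


@dataclass(frozen=True)
class Disruption:
    """Output schema (hypothetical): one record returned to the LLM."""
    station: str
    description: str


# Made-up sample records standing in for a real data source.
_SAMPLE = [
    Disruption("Bern", "Track maintenance"),
    Disruption("Zürich HB", "Signal failure"),
    Disruption("Bern", "Reduced service"),
]


def find_disruptions(query: DisruptionQuery) -> list[Disruption]:
    """Tool: return disruptions for a station, at most `query.limit` of them."""
    wanted = query.station.casefold()
    matches = [d for d in _SAMPLE if d.station.casefold() == wanted]
    return matches[: query.limit]


def handle_request(raw: dict) -> dict:
    """Validate raw arguments and report failures as a helpful error payload."""
    try:
        query = DisruptionQuery(**raw)
    except (TypeError, ValueError) as exc:
        return {"ok": False, "error": f"Invalid arguments: {exc}"}
    return {"ok": True, "results": [asdict(d) for d in find_disruptions(query)]}


print(handle_request({"station": "bern", "limit": 1}))
print(handle_request({"station": ""}))
```

With Pydantic, the two dataclasses would become `BaseModel` subclasses and the manual checks in `__post_init__` would move into field constraints.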

- Tool vs Resource

  - Choose Tool implementation if your data needs:

    - Active querying or computation

    - Parameter-based filtering

    - Complex transformations

  - Choose Resource implementation if your data is:

    - Static or rarely changing

    - Small enough to be loaded into memory

    - Simple file-based content

    - Reference documentation or lookup tables

  - Reference the MCP documentation for guidance
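The distinction can be sketched with plain functions over the same small dataset. This is only an illustration of the two shapes, not the MCP SDK's API; the function names and the lookup table are assumptions made for the example.

```python
# Illustrative lookup table: small, static, and cheap to hold in memory.
STATION_CODES = {"BN": "Bern", "ZUE": "Zürich HB", "GE": "Genève"}


def read_station_codes_resource() -> str:
    """Resource-style: return the whole table as text, with no parameters."""
    rows = sorted(STATION_CODES.items())
    return "\n".join(f"{code}\t{name}" for code, name in rows)


def search_stations_tool(name_prefix: str) -> list[str]:
    """Tool-style: filter the data according to a caller-supplied parameter."""
    prefix = name_prefix.casefold()
    return sorted(
        name for name in STATION_CODES.values()
        if name.casefold().startswith(prefix)
    )


print(read_station_codes_resource())
print(search_stations_tool("z"))
```

If the dataset were too large to return in one piece, or needed computation per request, only the tool-style shape would remain practical.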

- Testing

  - Add tests in the tests/ directory

  - Follow existing test patterns (see other provider tests)

  - Required test coverage:

    - Basic functionality

    - Edge cases

    - Error handling
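The three coverage areas might look like this for a small helper. This is a sketch only: `parse_limit` is a hypothetical function invented for the example, and the tests use plain `assert` statements so that they run without extra dependencies while remaining collectable by pytest.

```python
def parse_limit(value: str, default: int = 10, maximum: int = 100) -> int:
    """Hypothetical helper under test: parse a user-supplied result limit."""
    if value == "":
        return default
    number = int(value)  # raises ValueError on non-numeric input
    if number < 1:
        raise ValueError("limit must be at least 1")
    return min(number, maximum)


def test_basic_functionality() -> None:
    assert parse_limit("5") == 5


def test_edge_cases() -> None:
    assert parse_limit("") == 10       # empty input falls back to the default
    assert parse_limit("1000") == 100  # large values are capped at the maximum


def test_error_handling() -> None:
    for bad in ("0", "-3", "abc"):
        try:
            parse_limit(bad)
        except ValueError:
            continue
        raise AssertionError(f"expected ValueError for {bad!r}")
```

In a pytest suite, the `try`/`except` loop would usually be written with `pytest.raises(ValueError)` instead.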

- Validation

  - Test your MCP server using our experimental client: uv run src/odmcp/providers/client.py

  - Verify all endpoints respond correctly

  - Ensure error messages are helpful

  - Check performance with typical query loads

For other examples, check our existing providers in the src/odmcp/providers/ directory.

Contributing

We have an ambitious roadmap and we want this project to scale with the community. The ultimate goal is to make millions of public datasets available to all LLM applications.

For that we need your help!

Discord

We want to build a helpful community around the challenge of bringing open data to LLMs. Join us on Discord to start chatting: https://discord.gg/QPFFZWKW

Our Core Guidelines

Because of our target scale we want to keep things simple and pragmatic at first. Tackle issues with the community as they come along.

- Simplicity and Maintainability

  - Minimize abstractions to keep codebase simple and scalable

  - Focus on clear, straightforward implementations

  - Avoid unnecessary complexity

- Standardization / Templates

  - Follow provided templates and guidelines consistently

  - Maintain uniform structure across providers

  - Use common patterns for similar functionality

- Dependencies

  - Keep external dependencies to a minimum

  - Prioritize single repository/package setup

  - Carefully evaluate necessity of new dependencies

- Code Quality

  - Format code using ruff

  - Maintain comprehensive test coverage with pytest

  - Follow consistent code style

- Type Safety

  - Use Python type hints throughout

  - Leverage Pydantic models for API request/response validation

  - Ensure type safety in data handling

Tactical Topics (our current priorities)

- Initialize repository with guidelines, testing framework, and contribution workflow

- Implement CI/CD pipeline with automated PyPI releases

- Develop provider template and first reference implementation

- Integrate additional open datasets (actively seeking contributors)

- Establish clear guidelines for choosing between Resources and Tools

- Develop scalable repository architecture for long-term growth

- Expand MCP SDK parameter support (authentication, rate limiting, etc.)

- Implement additional MCP protocol features (prompts, resource templates)

- Add support for alternative transport protocols beyond stdio (SSE)

- Deploy hosted MCP servers for improved accessibility

Roadmap

Let’s build the open source infrastructure that will allow all LLMs to access all Open Data together!

Access:

- Make Open Data available to all LLM applications (beyond Claude)

- Make Open Data sources searchable in a scalable way

- Make Open Data available through MCP remotely (SSE) with publicly sponsored infrastructure

Publish:

- Build the many Open Data MCP servers to make all the Open Data truly accessible (we need you!).

- On our side, we are starting to build MCP servers for Switzerland's ~12k open datasets!

- Make it even easier to build Open Data MCP servers

We are very early, and the lack of available datasets is currently the bottleneck. Help yourself! Create your Open Data MCP server and get users to use it from their LLM applications as well. Let’s connect LLMs to the millions of open datasets from governments, public entities, companies and NGOs!

As Anthropic's MCP evolves we will adapt and upgrade Open Data MCP.

Limitations

- All data served by Open Data MCP servers should be Open.

- Please comply with the data licenses of the data providers.

- Our License must be quoted in commercial applications.

References

- Kudos to Anthropic's open source MCP release for enabling initiatives like this one.

License

This project is licensed under the MIT License - see the LICENSE file for details

Star History

Repository Owner

Organization

Repository Details

Programming Languages

Tags

Topics

Join Our Newsletter

Stay updated with the latest AI tools, news, and offers by subscribing to our weekly newsletter.

Related MCPs

Discover similar Model Context Protocol servers

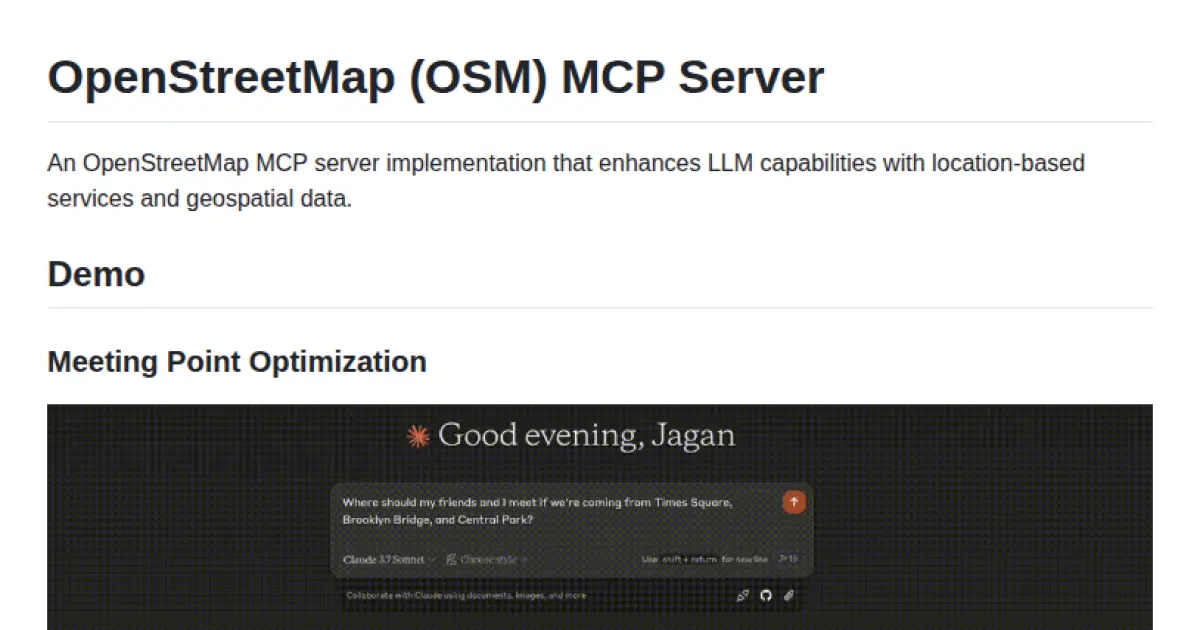

OpenStreetMap MCP Server

Enhancing LLMs with geospatial and location-based capabilities via the Model Context Protocol.

OpenStreetMap MCP Server enables large language models to interact with rich geospatial data and location-based services through a standardized protocol. It provides APIs and tools for address geocoding, reverse geocoding, points of interest search, route directions, and neighborhood analysis. The server exposes location-related resources and tools, making it compatible with MCP hosts for seamless LLM integration.

- ⭐ 134

- MCP

- jagan-shanmugam/open-streetmap-mcp

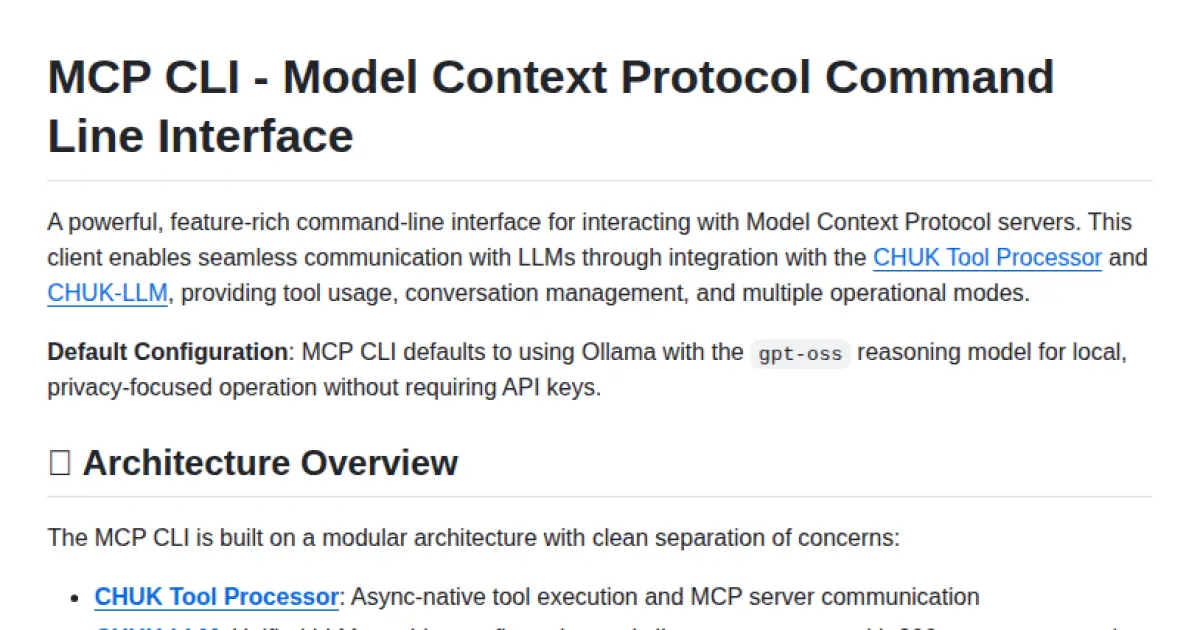

MCP CLI

A powerful CLI for seamless interaction with Model Context Protocol servers and advanced LLMs.

MCP CLI is a modular command-line interface designed for interacting with Model Context Protocol (MCP) servers and managing conversations with large language models. It integrates with the CHUK Tool Processor and CHUK-LLM to provide real-time chat, interactive command shells, and automation capabilities. The system supports a wide array of AI providers and models, advanced tool usage, context management, and performance metrics. Rich output formatting, concurrent tool execution, and flexible configuration make it suitable for both end-users and developers.

- ⭐ 1,755

- MCP

- chrishayuk/mcp-cli

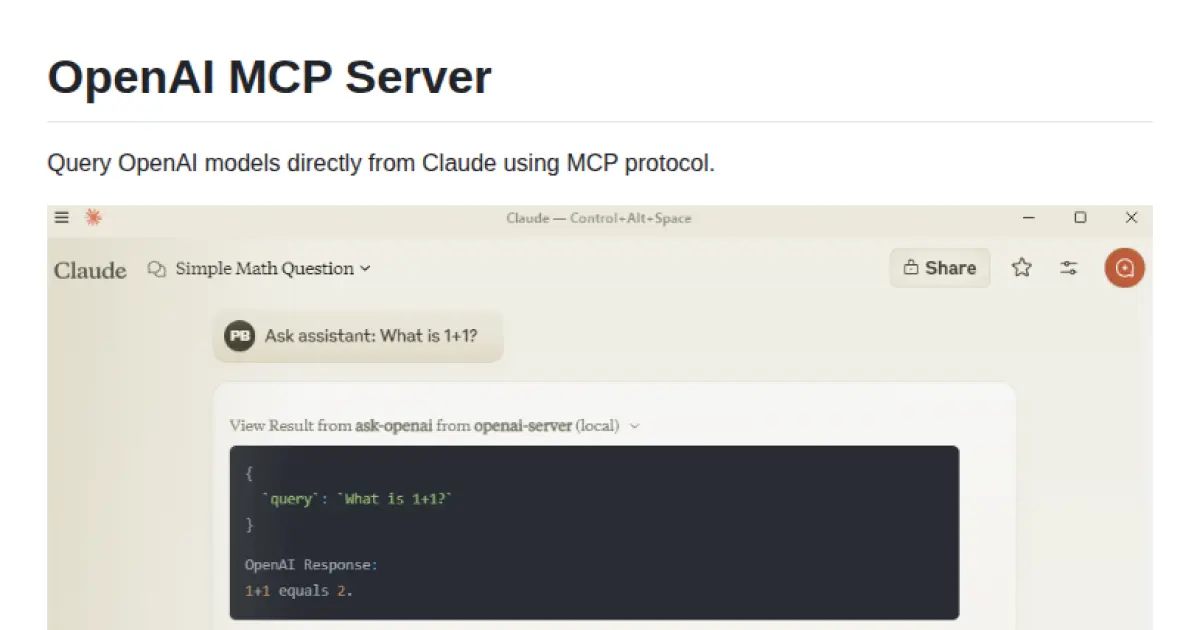

OpenAI MCP Server

Bridge between Claude and OpenAI models using the MCP protocol.

OpenAI MCP Server enables direct querying of OpenAI language models from Claude via the Model Context Protocol (MCP). It provides a configurable Python server that exposes OpenAI APIs as MCP endpoints. The server is designed for seamless integration, requiring simple configuration updates and environment variable setup. Automated testing is supported to verify connectivity and response from the OpenAI API.

- ⭐ 77

- MCP

- pierrebrunelle/mcp-server-openai

mcp-get

A command-line tool for discovering, installing, and managing Model Context Protocol servers.

mcp-get is a CLI tool designed to help users discover, install, and manage Model Context Protocol (MCP) servers. It enables seamless integration of Large Language Models (LLMs) with various external data sources and tools by utilizing a standardized protocol. The tool provides access to a curated registry of MCP servers and supports installation and management across multiple programming languages and environments. Although now archived, mcp-get simplifies environment variable management, package versioning, and server updates to enhance the LLM ecosystem.

- ⭐ 497

- MCP

- michaellatman/mcp-get

MCP Server for Odoo

Connect AI assistants to Odoo ERP systems using the Model Context Protocol.

MCP Server for Odoo enables AI assistants such as Claude to interact seamlessly with Odoo ERP systems via the Model Context Protocol (MCP). It provides endpoints for searching, creating, updating, and deleting Odoo records using natural language while respecting access controls and security. The server supports integration with any Odoo instance, includes smart features like pagination and LLM-optimized output, and offers both demo and production-ready modes.

- ⭐ 101

- MCP

- ivnvxd/mcp-server-odoo

YDB MCP

MCP server for AI-powered natural language database operations on YDB.

YDB MCP acts as a Model Context Protocol server enabling YDB databases to be accessed via any LLM supporting MCP. It allows AI-driven and natural language interaction with YDB instances by bridging database operations with language model interfaces. Flexible deployment through uvx, pipx, or pip is supported, along with multiple authentication methods. The integration empowers users to manage YDB databases conversationally through standardized protocols.

- ⭐ 24

- MCP

- ydb-platform/ydb-mcp

Didn't find tool you were looking for?