GPT–LLM Playground Uptime Monitor

Your Comprehensive Testing Environment for Language Learning Models

Last 30 Days Performance

Average Uptime

0%

Based on 30-day monitoring period

Average Response Time

0ms

Mean response time across all checks

Daily Status Overview

Historical Performance

Jan-2026

0% uptime

Monthly Uptime

0%

Monthly Response Time

0ms

Daily Status Breakdown

Dec-2025

0% uptime

Monthly Uptime

0%

Monthly Response Time

0ms

Daily Status Breakdown

Nov-2025

0% uptime

Monthly Uptime

0%

Monthly Response Time

0ms

Daily Status Breakdown

Oct-2025

0% uptime

Monthly Uptime

0%

Monthly Response Time

0ms

Daily Status Breakdown

Sep-2025

0% uptime

Monthly Uptime

0%

Monthly Response Time

0ms

Daily Status Breakdown

Aug-2025

0% uptime

Monthly Uptime

0%

Monthly Response Time

0ms

Daily Status Breakdown

Jul-2025

0% uptime

Monthly Uptime

0%

Monthly Response Time

0ms

Daily Status Breakdown

Jun-2025

0% uptime

Monthly Uptime

0%

Monthly Response Time

0ms

Daily Status Breakdown

Related Uptime Monitors

Explore uptime status for similar tools that also have monitoring enabled.

BenchLLM

Status: Operational

The best way to evaluate LLM-powered apps

BenchLLM is a tool for evaluating LLM-powered applications. It allows users to build test suites, generate quality reports, and choose between automated, interactive, or custom evaluation strategies.

Last checked: 1 month ago

PromptsLabs

Status: Operational

A Library of Prompts for Testing LLMs

PromptsLabs is a community-driven platform providing copy-paste prompts to test the performance of new LLMs. Explore and contribute to a growing collection of prompts.

Last checked: 1 month ago

Compare AI Models

Status: Issues

AI Model Comparison Tool

Compare AI Models is a platform providing comprehensive comparisons and insights into various large language models, including GPT-4o, Claude, Llama, and Mistral.

Last checked: 1 month ago

OppenheimerGPT

Status: Operational

Compare Leading AI Models Side-by-Side

OppenheimerGPT is a macOS app that allows users to compare responses from top AI models like Gemini and ChatGPT simultaneously. Enhance your prompting experience and determine the best AI for your needs.

Last checked: 1 month ago

PromptMage

Status: Operational

A Python framework for simplified LLM-based application development

PromptMage is a Python framework that streamlines the development of complex, multi-step applications powered by Large Language Models (LLMs), offering version control, testing capabilities, and automated API generation.

Last checked: 1 month ago View Status -

Operational

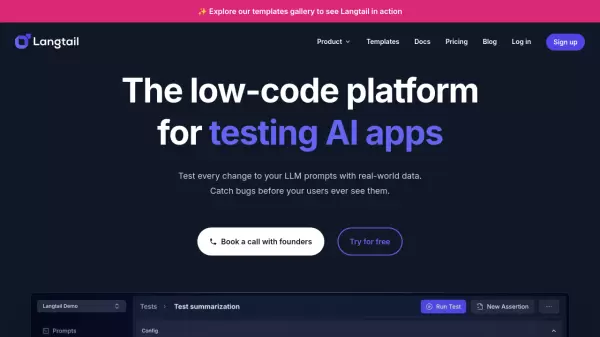

OperationalLangtail

The low-code platform for testing AI apps

Langtail is a comprehensive testing platform that enables teams to test and debug LLM-powered applications with a spreadsheet-like interface, offering security features and integration with major LLM providers.

Last checked: 1 month ago